Add Category Column to pandas DataFrame with cut

This post explains how to add a category column to a pandas DataFrame with cut(). cut makes it easy to categorize numerical values in buckets. Let’s look at a a […]

This post explains how to add a category column to a pandas DataFrame with cut(). cut makes it easy to categorize numerical values in buckets. Let’s look at a a […]

This post shows you how to set up conda on your machine and explains why it’s the best way to manage software environments for Dask projects. This blog post says […]

This blog post demonstrates different approaches for splitting a large CSV file into smaller CSV files and outlines the costs / benefits of the different approaches. TL;DR It’s faster to […]

Meetings are the main way to kill your productivity as a creative professional. Two strategically timed meetings can eliminate your makers hours for an entire day. Rejecting meeting invites to […]

This post describes a workflow for self publishing programming books that readers will love. Writing a book seems like a daunting task, but it’s less intimidating if each chapter is […]

This post explains how to read Delta Lakes into pandas DataFrames. The delta-rs library makes this incredibly easy and doesn’t require any Spark dependencies. Let’s look at some simple examples, […]

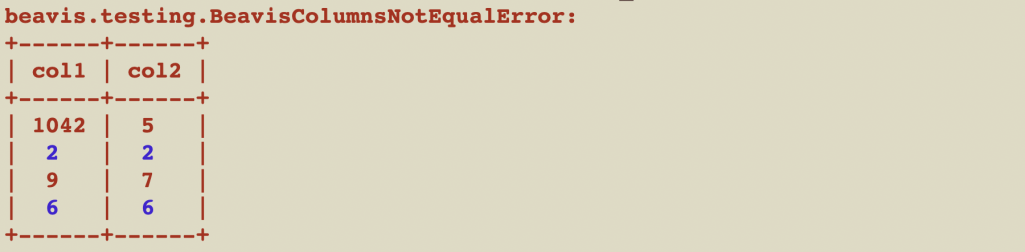

This post explains how to test Pandas code with the built-in test helper methods and with the beavis functions that give more readable error messages. Unit testing helps you write […]

This post explains how to create DataFrames with ArrayType columns and how to perform common data processing operations. Array columns are one of the most useful column types, but they’re […]

This post explains how to define PySpark schemas and when this design pattern is useful. It’ll also explain when defining schemas seems wise, but can actually be safely avoided. Schemas […]

This article explains how to rename a single or multiple columns in a Pandas DataFrame. There are multiple different ways to rename columns and you’ll often want to perform this […]