Expressively Typed Spark Datasets with Frameless

frameless is a great library for writing Datasets with expressive types. The library helps users write correct code with descriptive compile-time errors instead of runtime errors with long stack […]

This blog post explains how to write out a DataFrame to a single file with Spark. It also describes how to write out data to a file with a specific name, […]

This blog post explains how to filter in Spark and discusses the vital factors to consider when filtering. Poorly executed filtering operations are a common bottleneck in Spark analyses. You […]

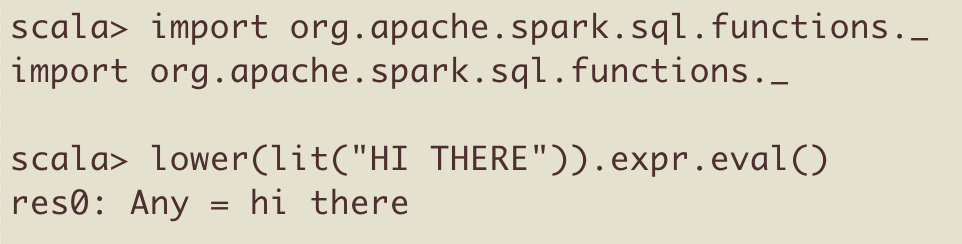

You can execute Spark column functions with a clever combination of expr and eval(). This technique lets you execute Spark functions without having to create a DataFrame. This makes it […]

The term “column equality” refers to two different things in Spark: (1) when a column is equal to a particular value (typically when filtering); (2) when all the values in two columns […]

Spark DataFrame columns support maps, which are great for key/value pairs of arbitrary length. This blog post describes how to create MapType columns, demonstrates built-in functions to […]

This blog post explains how to import core Spark and Scala libraries like spark-daria into your projects. It’s important for library developers to organize package namespaces so it’s easy for […]

Apache Spark is a big data engine that has quickly become one of the biggest distributed processing frameworks in the world. It’s used by all the big financial institutions and […]

This blog post explains how to use the HyperLogLog algorithm to perform fast count distinct operations. HyperLogLog sketches can be generated with spark-alchemy, loaded into Postgres databases, and queried with […]

Spark writers allow for data to be partitioned on disk with partitionBy. Some queries can run 50 to 100 times faster on a partitioned data lake, so partitioning is vital […]